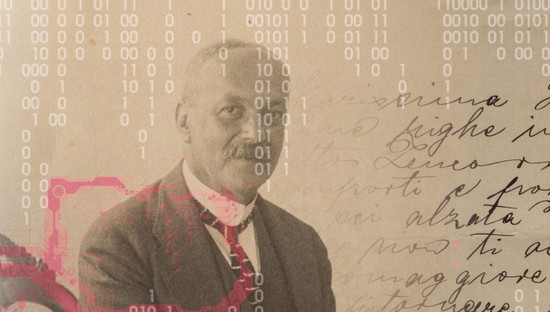

Gabriele Sarti

Postdoc in LLM Interpretability

Khoury College of Computer Sciences, Northeastern University

Welcome to my website! 👋 I am a postdoc at the BauLab at Northeastern University, working on interpretability interfaces and white-box methods for the evaluations ecosystem as part of the National Deep Inference Fabric (NDIF).

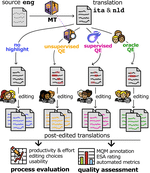

Previously, I was a PhD student at the University of Groningen, where I completed my thesis on actionable interpretability for machine translation as a member of the InCLoW team, the GroNLP group and the Dutch InDeep consortium. During my PhD, I was an applied scientist intern at Amazon Translate in NYC, and before that I was a research scientist at Aindo.

My current research interests include LLM reasoning, interpretability, user modeling and monitoring of agentic systems. I’m especially interested in making white-box auditing a practical part of how we evaluate frontier AI, since behavioral tests fail to surface unverbalized behaviors and are increasingly inadequate as models become more capable. I work on ways to surface and steer the beliefs, goals, and plans behind what an agent does, and on the open infrastructure that links interpretability tools to the evaluation ecosystem. If you’re excited about these topics, shoot me a message!

Your (anonymous) constructive feedback is always welcome! 🙂

Interests

- Reasoning Language Models

- Mechanistic Interpretability

- User Modeling and Personalization

- Alignment Auditing for Agents

Education

- PhD in Natural Language Processing, University of Groningen (NL), 2021 - 2025

- MSc. in Data Science and Scientific Computing, University of Trieste & SISSA (IT), 2018 - 2020

Experience

- Postdoctoral Researcher, Northeastern University (US), 2026 - present

- Applied Scientist Intern, Amazon Web Services (US), 2022

- Research Scientist, Aindo (IT), 2020 - 2021